Breaking Down Buffer of Thoughts: A Way to Understand the Human Mind

We learn something by learning many things

Introduction

With the rise of Large Language Models (LLMs) like Gemini, Claude, and GPT, we now have access to powerful tools that can significantly enhance our work lives. For example, with a simple question, GPT-4 can provide multiple solutions to a coding challenge, offering a range of options to improve project efficiency.

To make LLMs more efficient, we can explore techniques such as quantization, pruning, and distillation. Prompt engineering, on the other hand, is a technique that steers LLMs toward results that fit our interests.

It is a relatively new discipline that develops and optimizes prompts so that language models (LMs) can be used efficiently across a variety of applications and research topics. It involves designing robust prompting techniques, interacting with LLMs, and improving their safety and capabilities.

There are many approaches to crafting a good prompt for our work, for instance, few-shot prompting and chain-of-thought prompting. Another is to assign the LLM a role and then ask it to do the task. For instance, the prompt might read: “You are an expert in fashion. Your task is to suggest something for me, since I am doing something. In detail, I want [something that you like].” We give the LLM a role, then ask it to carry out the task the way we want.
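To make the role-based pattern concrete, here is a minimal Python sketch that assembles such a prompt from three parts: the role, the task, and the details. The helper name `build_role_prompt` and the sample values are hypothetical, chosen only for illustration; the output is plain text that could be sent to any LLM.

```python
def build_role_prompt(role: str, task: str, details: str) -> str:
    """Hypothetical helper: assign a role, state the task, then add the details."""
    return (
        f"You are an expert in {role}. "   # the role we give the model
        f"Your task is to {task}. "        # what we want it to do
        f"In detail, I want {details}."    # how we want it done
    )


prompt = build_role_prompt(
    role="fashion",
    task="suggest an outfit for a summer wedding",
    details="something light, in pastel colours, under $200",
)
print(prompt)
```

Keeping the three slots separate makes it easy to reuse the same structure for other roles and tasks.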

In this article, I want to introduce a technique called Buffer of Thoughts (BoT), a “super-smart” thinking tool that helps large language models (LLMs) learn from the problems they have already solved, making them more accurate, efficient, and reliable in their reasoning. The secret behind its “super-smart” behavior lies in its meta-buffer and buffer-manager, which allow it to store abstractions of problems. The article is structured as follows:

A Brief Summary: We introduce the concept of Buffer of Thoughts.

Into Details: We discuss BoT’s architecture and some of its achievements.

Conclusion: We summarize what we have covered and discuss possible future improvements.

A Brief Summary

Imagine having a super-smart assistant that can help us solve complex problems and make better decisions. That’s what Buffer of Thoughts (BoT) is all about.

Two components make BoT stand out: the Meta-Buffer and the Buffer-Manager. The former stores thoughts, or high-level guidelines, that we distill from solving many problems and then apply to similar problems. The latter manages those thoughts: it generates new templates or adjusts existing ones.

When we solve problems, such as doing math or playing the piano, we develop a way of approaching them that works best for us. That “way” can be regarded as a template: it is formed from problems we have already faced, and we can reuse it to solve related problems.

Once we can play “Happy Birthday”, we can expect to pick up “Twinkle, Twinkle, Little Star” more easily. Our way of solving a problem may also change over time: we can learn new ways to play a song or improve the way we already play it. The way we “update” our “way” corresponds to the Buffer-Manager.

By storing a collection of clever ideas and strategies, BoT helps LLMs tackle tough challenges more efficiently and accurately. The system works like a mental library that draws on accumulated knowledge to find the best approach to a problem. The result is a more capable and reliable model that can help us make better decisions and solve complex problems.

Into Details

BoT solves a problem by reusing thought-templates distilled from problems it has already solved; these templates are stored in the Meta-Buffer. Before it can do that, however, BoT needs to understand the problem it is facing (“Have we seen it before?”, “What category does it belong to?”, etc.). To this end, a problem-distiller extracts the critical task-specific information along with the relevant constraints. The distilled information is then used to search the Meta-Buffer for the most relevant template. Over time, we change or learn new ways to solve problems, so new thought-templates emerge; the Buffer-Manager is in charge of managing them.
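The overall loop (distill, retrieve, reuse or store) can be sketched in a few lines of Python. This is a toy, not BoT's actual implementation: the class names `ToyBufferOfThoughts` and `ThoughtTemplate` are hypothetical, the "distiller" just collects lowercase words, and retrieval counts shared words. In BoT itself, an LLM performs the distillation and templates are compared by embedding similarity.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ThoughtTemplate:
    name: str
    keywords: set[str]  # toy "signature" used for retrieval
    steps: str          # the reusable high-level solution approach


@dataclass
class ToyBufferOfThoughts:
    """Toy version of the loop: distill -> retrieve -> reuse, or store if new."""

    meta_buffer: list[ThoughtTemplate] = field(default_factory=list)

    def distill(self, problem: str) -> set[str]:
        # Stand-in for the LLM-based problem-distiller: a set of lowercase words.
        return {w.strip("?.,!").lower() for w in problem.split()}

    def retrieve(self, keywords: set[str]) -> Optional[ThoughtTemplate]:
        # Pick the template sharing the most keywords; None when nothing overlaps.
        best = max(self.meta_buffer, key=lambda t: len(t.keywords & keywords), default=None)
        if best is None or not (best.keywords & keywords):
            return None
        return best

    def solve(self, problem: str) -> str:
        keywords = self.distill(problem)
        template = self.retrieve(keywords)
        if template is not None:
            # Known kind of problem: reuse the stored template.
            return f"reuse {template.name}: {template.steps}"
        # New task. A real buffer-manager would distill a proper template
        # from the solved problem; this toy simply stores the keywords.
        new = ThoughtTemplate(f"template-{len(self.meta_buffer)}", keywords, "reason step by step")
        self.meta_buffer.append(new)
        return f"new task, stored {new.name}"


bot = ToyBufferOfThoughts([ThoughtTemplate("addition", {"how", "many", "total"}, "add the quantities")])
print(bot.solve("How many animals are on the farm?"))
print(bot.solve("Find checkmate in one move"))
```

The second call finds no matching template, so the toy stores a new one; a later checkmate question would then reuse it.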

Problem Distiller

LLMs often struggle during reasoning with extracting the important information, recognizing potential constraints, and carrying out accurate reasoning. The problem-distiller addresses this by first extracting and recognizing the key elements of a problem. It focuses on:

Essential parameters and variables for problem-solving.

The objectives of the input tasks and their corresponding constraints.

The gathered information is then reorganized into a clearer format that can be mapped to higher-level concepts or structures.

For instance, take a basic addition problem: “How many animals does the farm have when there are 3 cows and 5 chickens?” The problem-distiller translates it into an “a + b = c” problem, and the distilled problem is then used to retrieve a relevant template to solve it.
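Here is a minimal, rule-based sketch of what a distiller could output for the farm question: the essential variables, the objective, a constraint, and the abstract structure. Everything here (`DistilledProblem`, `distill`, the regular expression) is hypothetical and only handles simple counting questions; in BoT, the problem-distiller is an LLM following a distillation prompt.

```python
import re
from dataclasses import dataclass, field


@dataclass
class DistilledProblem:
    variables: dict[str, int]                             # essential parameters
    objective: str                                        # what the question asks for
    constraints: list[str] = field(default_factory=list)  # conditions on the answer
    structure: str = "unknown"                            # higher-level form of the problem


def distill(problem: str) -> DistilledProblem:
    """Rule-based toy distiller for simple counting questions."""
    text = problem.lower()
    # Essential parameters: every "<number> <noun>" pair, e.g. "3 cows".
    variables = {noun: int(num) for num, noun in re.findall(r"(\d+)\s+([a-z]+)", text)}
    if "how many" not in text:
        return DistilledProblem(variables, objective="unknown")
    # A counting question sums the quantities: "a + b = c" for two of them.
    names = [chr(ord("a") + i) for i in range(len(variables) + 1)]
    structure = " + ".join(names[:-1]) + " = " + names[-1]
    return DistilledProblem(
        variables,
        objective="total count",
        constraints=["counts are non-negative integers"],
        structure=structure,
    )


d = distill("How many animals does the farm have when there are 3 cows and 5 chickens?")
print(d.structure, d.variables)  # a + b = c {'cows': 3, 'chickens': 5}
```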

Meta-Buffer

This part is quite intriguing because it mimics how humans solve problems: we group the problems we encounter into a few clusters. Concretely, we can view the Meta-Buffer as a library of thought-templates, each used to solve one type of problem. The Meta-Buffer, and every thought-template stored in it, is managed by the Buffer-Manager.

A relevant template is retrieved by measuring the similarity between the distilled problem and every template in the Meta-Buffer. The template with the highest similarity score is considered the most relevant, provided that its score exceeds a preset threshold.
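The retrieval step can be sketched as "embed, score, pick the best if it clears a threshold". To keep the example self-contained, this sketch assumes a bag-of-words vector with cosine similarity instead of the learned text embeddings BoT uses; the function names and the threshold value of 0.3 are arbitrary choices for illustration.

```python
import math
from collections import Counter
from typing import Optional


def embed(text: str) -> Counter:
    # Stand-in embedding: bag of lowercase words.
    return Counter(w.strip(".,?!") for w in text.lower().split())


def cosine(u: Counter, v: Counter) -> float:
    dot = sum(u[k] * v[k] for k in u.keys() & v.keys())
    norm = math.sqrt(sum(x * x for x in u.values())) * math.sqrt(sum(x * x for x in v.values()))
    return dot / norm if norm else 0.0


def retrieve(distilled: str, meta_buffer: dict[str, str], threshold: float = 0.3) -> Optional[str]:
    """Return the name of the most similar template, or None if the task looks new."""
    query = embed(distilled)
    scores = {name: cosine(query, embed(desc)) for name, desc in meta_buffer.items()}
    if not scores:
        return None
    best = max(scores, key=scores.get)
    return best if scores[best] >= threshold else None


templates = {
    "sum": "add two quantities to get a total",
    "chess": "find a checkmate move on a chess board",
}
print(retrieve("add the quantities to get the total", templates))  # sum
print(retrieve("compose haiku about autumn leaves", templates))    # None
```

Returning `None` below the threshold is what lets the system tell a familiar task from a new one.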

A natural question follows: what if the task is entirely new to the Meta-Buffer? BoT distinguishes two situations:

A relevant template is successfully retrieved.

The task is identified as a new task.

In both cases, the distilled problem is sent to a meta-reasoner, which is in charge of the reasoning and has several predefined reasoning structures to answer the problem. In case (1), it instantiates the retrieved thought-template for the given problem. In case (2), it falls back on a few general, coarse-grained thought-templates and uses the one that best suits the distilled information.
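A minimal sketch of how the two cases could be dispatched when building the reasoning prompt. The general templates' wording, the keyword routing in `pick_general`, and all function names are invented for illustration; BoT lets the LLM itself choose the suitable general template rather than using fixed rules.

```python
from typing import Optional

# Hypothetical general-purpose fallbacks for tasks with no matching template.
GENERAL_TEMPLATES = {
    "math": "Define the variables, write the equations, solve them, then check the answer.",
    "code": "Restate the requirements, write the program, then test it on the given inputs.",
    "text": "Identify the key facts, reason about them step by step, then state the answer.",
}


def pick_general(distilled: str) -> str:
    # Crude keyword routing used only to keep the sketch self-contained.
    text = distilled.lower()
    if any(ch.isdigit() for ch in text) or "equation" in text:
        return GENERAL_TEMPLATES["math"]
    if "program" in text or "function" in text:
        return GENERAL_TEMPLATES["code"]
    return GENERAL_TEMPLATES["text"]


def build_reasoning_prompt(distilled: str, template: Optional[str]) -> str:
    """Case (1): instantiate the retrieved template. Case (2): use a general one."""
    chosen = template if template is not None else pick_general(distilled)
    return f"Problem: {distilled}\nThought template: {chosen}\nApply the template to solve the problem."


print(build_reasoning_prompt("Write a function that reverses a list", None))
```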

Buffer-Manager

The Buffer-Manager summarizes the high-level principles and ideas that emerge from each problem-solving process. It generalizes specific solutions into knowledge that applies to many problems and stores that knowledge as thought-templates in the meta-buffer.

Template Distillation: To extract a general thought template, BoT proposes a three-step approach:

Core task summarization: identifying and describing the basic type and core challenges of the problem.

Solution steps description: summarizing the general steps for solving the problem.

General answering template: based on the above analysis, proposing a solution template or approach that can be widely applied to similar problems.
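The three distillation steps map naturally onto a small data structure, and onto a prompt that asks an LLM to fill it in. In this sketch, the `ThoughtTemplate` fields, the prompt wording, and `distillation_prompt` are hypothetical; they mirror the three steps above but are not BoT's actual prompt.

```python
from dataclasses import dataclass


@dataclass
class ThoughtTemplate:
    core_task: str             # step 1: problem type and core challenges
    solution_steps: list[str]  # step 2: general steps for solving it
    answer_template: str       # step 3: reusable approach for similar problems

    def render(self) -> str:
        steps = "\n".join(f"{i}. {s}" for i, s in enumerate(self.solution_steps, start=1))
        return (
            f"Core task: {self.core_task}\n"
            f"Solution steps:\n{steps}\n"
            f"General answering template: {self.answer_template}"
        )


def distillation_prompt(problem: str, solution: str) -> str:
    # Asks an LLM to perform the three steps on one solved problem.
    return (
        "Given the problem and its solution below, produce a thought template.\n"
        "1. Core task summarization: identify the problem type and its core challenges.\n"
        "2. Solution steps description: summarize the general steps that solved it.\n"
        "3. General answering template: propose an approach that applies to similar problems.\n\n"
        f"Problem: {problem}\nSolution: {solution}"
    )


t = ThoughtTemplate(
    core_task="Counting items of several kinds: the answer is a sum of quantities.",
    solution_steps=["List every quantity.", "Add them together.", "Report the total."],
    answer_template="Total = sum of the counts of each kind.",
)
print(t.render())
```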

To make the distilled templates generalize well and remain stable, BoT includes in-context examples in the template distillation, covering both in-task and cross-task examples.

Dynamic Update of Meta-Buffer: This is a crucial step that follows template distillation, where the distilled template is evaluated for integration into the meta-buffer.

This process ensures that the meta-buffer remains efficient and effective by selectively updating it with new, informative thoughts while avoiding redundancy.

Concretely, BoT computes the similarity between the newly distilled template and the existing templates, and adds the new template only if it is not too similar to any of them. Updating the meta-buffer only when necessary reduces the computational burden and keeps it lightweight, while still letting it accumulate valuable insights.
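The update rule can be sketched as "add only if nothing similar is already stored". As in a retrieval toy, this assumes bag-of-words cosine similarity in place of learned embeddings, and the threshold of 0.5 is an arbitrary illustrative value; `update_meta_buffer` is a hypothetical name.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    # Stand-in embedding: bag of lowercase words.
    return Counter(w.strip(".,?!") for w in text.lower().split())


def cosine(u: Counter, v: Counter) -> float:
    dot = sum(u[k] * v[k] for k in u.keys() & v.keys())
    norm = math.sqrt(sum(x * x for x in u.values())) * math.sqrt(sum(x * x for x in v.values()))
    return dot / norm if norm else 0.0


def update_meta_buffer(meta_buffer: list[str], new_template: str, threshold: float = 0.5) -> bool:
    """Append new_template unless a stored template is at least `threshold` similar."""
    new_vec = embed(new_template)
    if any(cosine(new_vec, embed(t)) >= threshold for t in meta_buffer):
        return False  # redundant: a close template already exists
    meta_buffer.append(new_template)
    return True


buffer = ["add all quantities to get the total"]
print(update_meta_buffer(buffer, "add all the quantities to get a total"))        # False
print(update_meta_buffer(buffer, "find a forcing checkmate sequence in chess"))  # True
```

Rejecting near-duplicates is what keeps the buffer small: each stored template should add something the others do not already cover.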

Evaluation

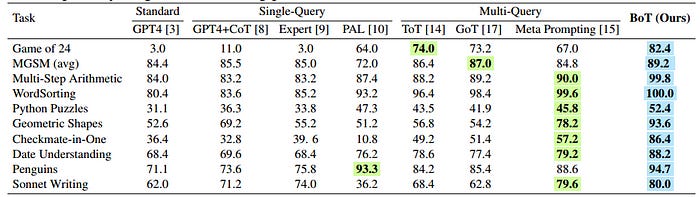

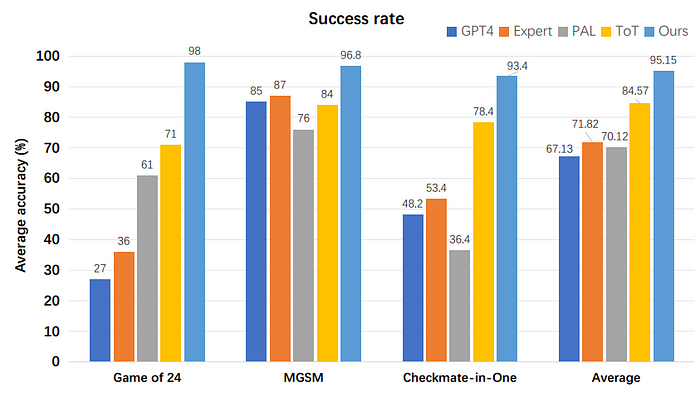

The proposed BoT method demonstrates significant improvements in both accuracy and efficiency for complex reasoning tasks.

By leveraging thought-templates and adaptively instantiating an optimal reasoning structure, BoT outperforms previous methods while reducing the time and cost required for complex reasoning:

Accuracy: BoT outperforms previous prompting methods, achieving significant accuracy improvements (up to 79.4%) in complex reasoning tasks like Game of 24 and Checkmate-in-One.

Efficiency: BoT's reasoning time is comparable to that of single-query methods, and it is considerably faster than conventional multi-query methods, requiring on average only 12% of their cost.

Reasoning Structure: BoT leverages historical insights and informative guidelines from thought-templates to adaptively instantiate an optimal reasoning structure for complex problems.

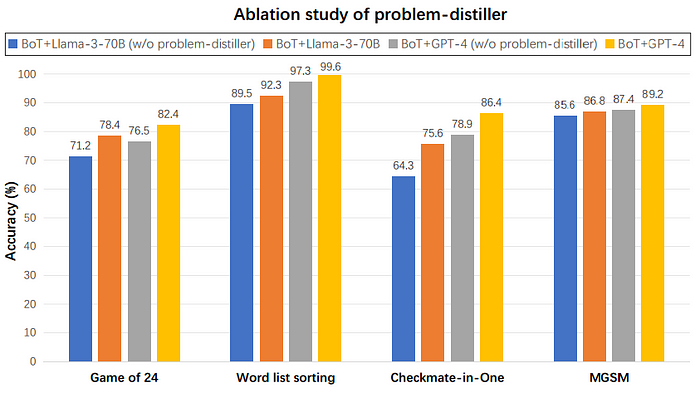

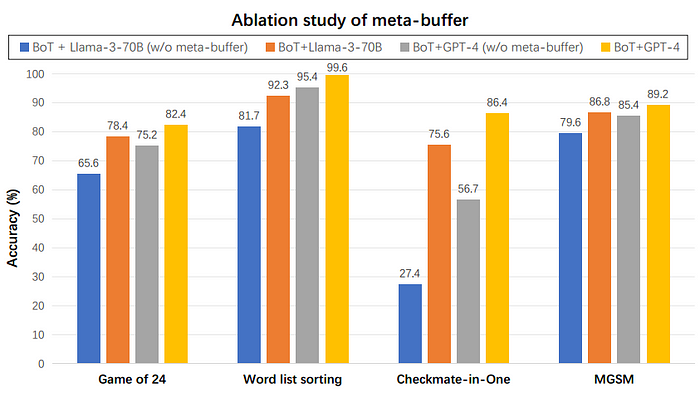

The ablation study further shows that each component contributes to BoT's performance and that, together, they make BoT robust.

The problem-distiller plays a crucial role in improving the accuracy of LLMs, particularly in complex problems where extracting key information and constraints is challenging. Its impact is more pronounced in tasks like Game of 24 and Checkmate-in-One, highlighting its importance in facilitating effective problem-solving.

The meta-buffer is equally important to the performance of LLMs, particularly in complex reasoning tasks: removing it leads to a significant drop in performance on these challenging benchmarks.

Conclusion

In this article, we introduced Buffer of Thoughts (BoT), a technique that enhances the reasoning of large language models (LLMs) by letting them learn from problems they have already solved. By leveraging its meta-buffer and buffer-manager, BoT allows LLMs to store abstractions of problems as thought-templates, making them more accurate, efficient, and reliable in their reasoning. Along the way, we explored the architecture of BoT and highlighted some of its notable results.

Despite its improvements in accuracy and efficiency, the method’s ability to address problems requiring human-like creativity is limited, as these problems often don’t rely on specific thought-templates. The method’s performance can also be impacted by the quality of the model used to initialize the meta-buffer, which can result in suboptimal thought-templates. To address these limitations, future directions for the method include integrating external resources to build an open-domain system and optimizing the distillation of thought-templates to enhance their quality for complex tasks.

As we look to the future, there are several directions that we can take to further improve and expand the capabilities of Buffer of Thoughts. Some potential areas of research include:

Integrating BoT with other techniques, such as quantization and pruning, to further enhance the efficiency and accuracy of LLMs.

Exploring the applications of BoT in various domains, such as healthcare, finance, and education.

Developing new architectures and algorithms that can leverage the capabilities of BoT.

Investigating the potential of BoT to improve the explainability and transparency of LLMs.

By pursuing these directions, we can unlock the full potential of Buffer of Thoughts and create a new generation of LLMs that are more intelligent, efficient, and reliable. As we continue to push the boundaries of what is possible with LLMs, we are excited to see the impact that Buffer of Thoughts will have on the field of natural language processing and beyond.

Thank you for reading this article; I hope it added something to your knowledge bank!

Appendix

An exemplar is an example written in a fixed template, so that every example shares the same structure for conveying information. For instance, suppose we have 100 examples illustrating how a person would feel after hearing a sentence. Each example follows the same template: “If [sentence], then he/she would feel [emotion].” This consistent format makes the examples easier to learn from and makes the generated results easier to parse and analyze.
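A small Python sketch shows why a fixed template helps: the same pattern that generates exemplars can parse them back into their two slots. The names `make_exemplar` and `parse_exemplar` and the sample sentences are hypothetical.

```python
import re
from typing import Optional

TEMPLATE = "If {sentence}, then he/she would feel {emotion}."
# Inverse of TEMPLATE: recovers the two slots from a generated exemplar.
PATTERN = re.compile(r"If (?P<sentence>.+), then he/she would feel (?P<emotion>[\w-]+)\.")


def make_exemplar(sentence: str, emotion: str) -> str:
    return TEMPLATE.format(sentence=sentence, emotion=emotion)


def parse_exemplar(text: str) -> Optional[tuple[str, str]]:
    match = PATTERN.fullmatch(text.strip())
    return (match["sentence"], match["emotion"]) if match else None


examples = [
    make_exemplar('"You passed the exam"', "happy"),
    make_exemplar('"I lost my keys"', "frustrated"),
]
print("\n".join(examples))                  # a few-shot block in one consistent format
print([parse_exemplar(e) for e in examples])
```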

Overall, this architecture depends on thought-templates to work well, which means it assumes there are enough problems to build up its templates, so that it becomes robust enough to solve as many problems as possible. For us humans, the equivalent is doing homework assignments for a subject, studying for exams, or practicing problem-solving exercises, where the repetition and variation of problems reinforce learning and improve problem-solving skills.